Investigating Manipulative Applications of Generative AI

With support from the Minderoo Foundation, Gradient Institute is exploring how bad actors could leverage the latest pre-trained language models for personalised persuasion and manipulation.

Our focus is on uncovering and illustrating ethical risks associated with this technology, contributing to a more informed and responsible technological landscape. We are consulting our network of experts as we formulate scenarios where bad actors use large language models for applications such as:

- Covertly collecting personal information by posing as a helpful assistant, exploiting trust to deceive individuals into sharing details unknowingly.

- Applying personalised persuasion techniques to endorse products or political views without revealing motives, tailoring messages to exploit information asymmetry.

- Distorting the perceptions that government representatives hold of public sentiment and of the salience of voting issues in their electorate, thereby influencing policy decision-making and undermining the democratic process.

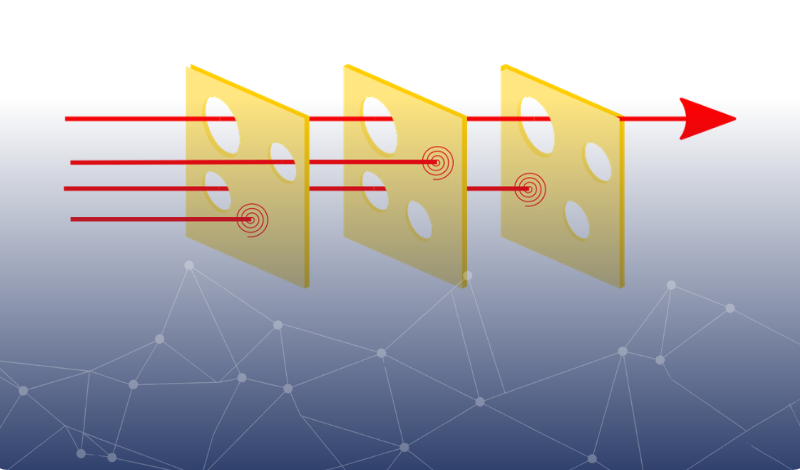

Moving beyond theory, we are actively developing software to demonstrate how viable these risks are, providing an interactive experience for senior decision-makers in government and industry. Our ultimate aim is to equip them with the knowledge they need to thoughtfully reassess risks, practices, or legislative imperatives as they navigate the rapidly evolving AI ecosystem.